Update Report: building host with hardened-debian-xfce

Building with

sudo ./whonix_build --flavor hardened-debian-xfce --target qcow2 --build

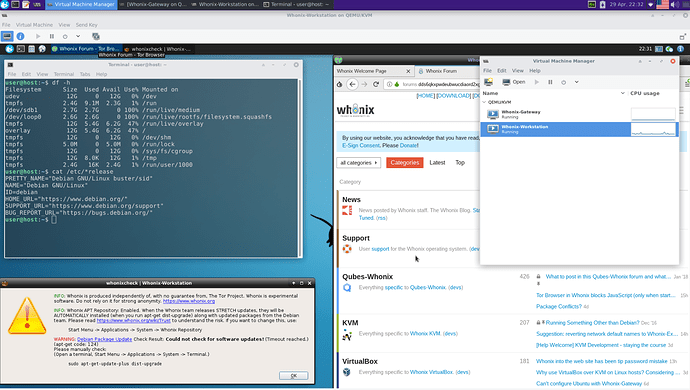

succeeded. On first boot, I noticed there was no /etc/apt/sources.list file, so I created one with Debian buster repos myself. Was this perhaps caused by not passing the --redistribute flag during the build?
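For reference, a hand-written buster sources.list could look roughly like the sketch below. The mirror URLs are the standard Debian ones, which is an assumption about what was actually used here, and `write_buster_sources` is a hypothetical helper name. The target path is a parameter so it can be tried on a scratch file first.

```shell
#!/bin/sh
# Hypothetical helper: write a basic Debian buster sources.list to the given path.
# The choice of mirrors and components is an assumption; adjust as needed.
write_buster_sources() {
    target="$1"
    cat > "$target" <<'EOF'
deb http://deb.debian.org/debian buster main
deb http://deb.debian.org/debian buster-updates main
deb http://security.debian.org/debian-security buster/updates main
EOF
}

# Example (as root, inside the booted system):
#   write_buster_sources /etc/apt/sources.list && apt-get update
```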

Anyway, after the successful build, I wrote a quick and dirty bash script which mounts the hardened-debian raw image and installs the following packages:

qemu-kvm libvirt-daemon-system libvirt-clients virt-manager

Then, still inside the chroot and again in a (dirty) scripted way, I configured the Whonix networks and VMs following the official documentation:

chroot $HARDENED_CHROOT/chroot addgroup user libvirt

chroot $HARDENED_CHROOT/chroot addgroup user kvm

cp *.xml $HARDENED_CHROOT/chroot/tmp/

chroot $HARDENED_CHROOT/chroot service libvirtd restart

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-autostart default

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-start default

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-define tmp/Whonix_external_network-15.0.0.0.9.xml

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-define tmp/Whonix_internal_network-15.0.0.0.9.xml

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-autostart external

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-start external

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-autostart internal

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system net-start internal

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system define tmp/Whonix-Gateway-XFCE-15.0.0.0.9.xml

chroot $HARDENED_CHROOT/chroot virsh -c qemu:///system define tmp/Whonix-Workstation-XFCE-15.0.0.0.9.xml
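The repeated `chroot ... virsh -c qemu:///system` prefix above could be folded into small wrapper functions, roughly as sketched here. `HARDENED_CHROOT` and the XML file names come from the commands above; `run_in_chroot`, `vsh`, `configure_whonix`, `DRY_RUN` and the default mount point are hypothetical names introduced only for this sketch. It assumes the XML files were already copied into the chroot's /tmp.

```shell
#!/bin/sh
# Sketch only. HARDENED_CHROOT and the XML names are from the post;
# everything else here is a hypothetical helper.
HARDENED_CHROOT="${HARDENED_CHROOT:-/mnt/hardened}"   # assumed mount point
VERSION="15.0.0.0.9"

# Run a command inside the chroot, or just print it when DRY_RUN=1.
run_in_chroot() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "chroot $HARDENED_CHROOT/chroot $*"
    else
        chroot "$HARDENED_CHROOT/chroot" "$@"
    fi
}

# Shorthand for virsh against the system libvirt instance.
vsh() {
    run_in_chroot virsh -c qemu:///system "$@"
}

configure_whonix() {
    run_in_chroot addgroup user libvirt
    run_in_chroot addgroup user kvm
    run_in_chroot service libvirtd restart

    vsh net-autostart default
    vsh net-start default

    # Define, autostart and start both Whonix networks.
    for net in external internal; do
        vsh net-define "tmp/Whonix_${net}_network-${VERSION}.xml"
        vsh net-autostart "$net"
        vsh net-start "$net"
    done

    # Define both VMs (this is the step that fails in this report).
    for vm in Gateway Workstation; do
        vsh define "tmp/Whonix-${vm}-XFCE-${VERSION}.xml"
    done
}
```

Running `DRY_RUN=1 configure_whonix` only prints the commands, which makes it easy to compare against the list above before running anything as root. The sketch does not stop on the first failing command; that is left out to keep it short.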

You were right: I was able to configure the networks using the .xml files after running service libvirtd restart in the chroot (see above).

However, defining the VMs does not work:

error: Failed to define domain from tmp/Whonix-Workstation-XFCE-15.0.0.0.9.xml

error: invalid argument: could not find capabilities for domaintype=kvm

The thing is that I am running the whole build and the commands above inside a Debian buster VM. Maybe this causes the could not find capabilities for domaintype=kvm error? Is there a known workaround to force the domain definition?
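A quick way to see whether KVM is exposed at all in a given environment (the build VM, or inside the chroot) is to look at the CPU flags and at /dev/kvm. The sketch below is only a diagnostic and `check_kvm` is a hypothetical helper name. It does not explain the libvirt error by itself, but a missing /dev/kvm inside a VM usually points at nested virtualization not being enabled on the outer host.

```shell
#!/bin/sh
# Diagnostic sketch; check_kvm is a hypothetical helper name.
# Prints what it finds and returns 0 only if /dev/kvm is readable and writable.
check_kvm() {
    # vmx = Intel VT-x, svm = AMD-V; counts cpuinfo lines exposing either flag.
    flags="$(grep -E -c '(vmx|svm)' /proc/cpuinfo 2>/dev/null)"
    echo "cpus with vmx/svm flag: ${flags:-0}"

    if [ -r /dev/kvm ] && [ -w /dev/kvm ]; then
        echo "/dev/kvm: accessible"
        return 0
    fi
    echo "/dev/kvm: missing or not accessible"
    return 1
}

# On the outer (physical) host, nested virtualization state can be read from
# /sys/module/kvm_intel/parameters/nested or /sys/module/kvm_amd/parameters/nested.
```

Calling `check_kvm` once in the Debian buster VM and once inside the chroot shows whether the two environments see the same thing.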

EDIT: I mounted the .raw VM image directly on my host and it did not work there either. Somehow the chroot environment does not “believe” that it has KVM capabilities, even though the host pseudo-filesystems are bind-mounted into it:

mount --bind /dev chroot/dev

mount --bind /proc chroot/proc

mount --bind /dev/pts chroot/dev/pts
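The three bind mounts above can be wrapped together with a matching cleanup step, as in this sketch. `bind_pseudo_fs`, `unbind_pseudo_fs`, `run` and `DRY_RUN` are hypothetical names; the mount list simply mirrors the one above and is not claimed to be sufficient for libvirt's KVM detection.

```shell
#!/bin/sh
# Sketch only: all function names and DRY_RUN are hypothetical.
# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# Bind the same three host pseudo-filesystems as in the post.
bind_pseudo_fs() {
    root="$1"
    for fs in /dev /proc /dev/pts; do
        run mount --bind "$fs" "$root$fs"
    done
}

# Unmount in reverse order so /dev/pts is released before /dev.
unbind_pseudo_fs() {
    root="$1"
    for fs in /dev/pts /proc /dev; do
        run umount "$root$fs"
    done
}
```

With `DRY_RUN=1`, `bind_pseudo_fs chroot` prints the same three mount commands as listed above.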

Currently looking online for a solution, nothing found yet.