Another way to considerably speed up the Whonix.org website could be to switch from the standard HTTP/1.1 protocol to HTTP/2 or SPDY.

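Standard HTTP/1.1 works by first requesting and fetching the HTML page. The browser then reads the HTML page and has to request and fetch all the other page assets (CSS, JavaScript, images, fonts, etc.). In practice each connection carries only one request at a time (pipelining is disabled in most browsers), and browsers open only a handful of connections per server. So for a single webpage there can be dozens of separate requests to the server, largely waiting in line behind one another. This really slows total page load times, especially on high-latency connections.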
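To make the round-trip cost concrete, here is a toy Python model (a sketch, not a measurement). It assumes a browser limit of 6 connections per host, no pipelining and a fixed round-trip time per request, and it ignores bandwidth, TCP/TLS handshakes and caching. The function names, the asset count and the 300 ms RTT are all hypothetical.

```python
import math


def round_trips_http11(num_assets: int, max_connections: int = 6) -> int:
    """Round trips to load the HTML page plus its assets over HTTP/1.1.

    Toy model: one request in flight per connection (no pipelining),
    so assets are fetched in batches of `max_connections`.
    """
    html_round_trip = 1
    return html_round_trip + math.ceil(num_assets / max_connections)


def round_trips_http2(num_assets: int) -> int:
    """Round trips over HTTP/2 in the same toy model.

    After the HTML arrives, every asset request is sent at once as a
    separate stream on a single connection, so they share one round trip.
    """
    html_round_trip = 1
    return html_round_trip + (1 if num_assets > 0 else 0)


if __name__ == "__main__":
    assets = 40   # hypothetical number of CSS/JS/image/font files
    rtt_ms = 300  # hypothetical round-trip time, e.g. over a slow Tor circuit
    for name, trips in (("HTTP/1.1", round_trips_http11(assets)),
                        ("HTTP/2", round_trips_http2(assets))):
        print(f"{name}: {trips} round trips, ~{trips * rtt_ms} ms")
```

With these numbers it prints 8 round trips (~2400 ms) for HTTP/1.1 versus 2 round trips (~600 ms) for HTTP/2. Real gains depend on bandwidth, server response time and how the page is built, but the model shows why latency dominates when assets queue up.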

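HTTP/2 and SPDY (which is being deprecated in favor of HTTP/2 over the next year) work differently. The browser sends all of its requests concurrently over a single connection, and the server interleaves the responses on that same connection (multiplexing), so assets no longer wait in line for a free connection. Both protocols also compress headers and let the browser prioritize requests, and the server can even push assets it expects the browser to need before the browser asks for them. So the whole page loads over one connection instead of through many separate, queued requests.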
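Multiplexing itself can be sketched the same way. The toy Python model below splits each response into frames tagged with a stream ID, interleaves them round-robin on one "connection", and reassembles them on the other side. The function names and sample bodies are hypothetical, the frame size is shrunk to 4 bytes for readability, and real HTTP/2 details such as binary frame headers, HPACK header compression, flow control and priorities are left out.

```python
from collections import defaultdict
from itertools import zip_longest

# Deliberately tiny so the interleaving is visible; HTTP/2's default
# maximum frame payload (SETTINGS_MAX_FRAME_SIZE) is 16,384 bytes.
FRAME_SIZE = 4


def split_into_frames(stream_id: int, body: bytes, frame_size: int = FRAME_SIZE):
    """Cut one response body into (stream_id, chunk) frames."""
    return [(stream_id, body[i:i + frame_size])
            for i in range(0, len(body), frame_size)]


def multiplex(responses: dict[int, bytes]) -> list[tuple[int, bytes]]:
    """Interleave frames from all responses round-robin on one connection."""
    per_stream = [split_into_frames(sid, body) for sid, body in responses.items()]
    wire = []
    for batch in zip_longest(*per_stream):
        # zip_longest pads finished streams with None; skip those slots.
        wire.extend(frame for frame in batch if frame is not None)
    return wire


def demultiplex(wire: list[tuple[int, bytes]]) -> dict[int, bytes]:
    """Reassemble each response by concatenating its frames in order."""
    bodies = defaultdict(bytes)
    for sid, chunk in wire:
        bodies[sid] += chunk
    return dict(bodies)


if __name__ == "__main__":
    # Hypothetical assets on client-initiated (odd-numbered) streams.
    responses = {1: b"h1{color:red}", 3: b"console.log(1);", 5: b"GIF89a"}
    wire = multiplex(responses)
    print([sid for sid, _ in wire])  # → [1, 3, 5, 1, 3, 5, 1, 3, 1, 3]
    assert demultiplex(wire) == responses
```

All three responses make progress in parallel instead of queueing behind each other. One caveat of this design: since everything shares a single TCP connection, one lost packet still stalls every stream until it is retransmitted.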

I’ve heard and seen that such HTTP multiplexing protocols can considerably speed up webpage load times.

See the following demo site, which compares plain HTTP with HTTPS plus SPDY…

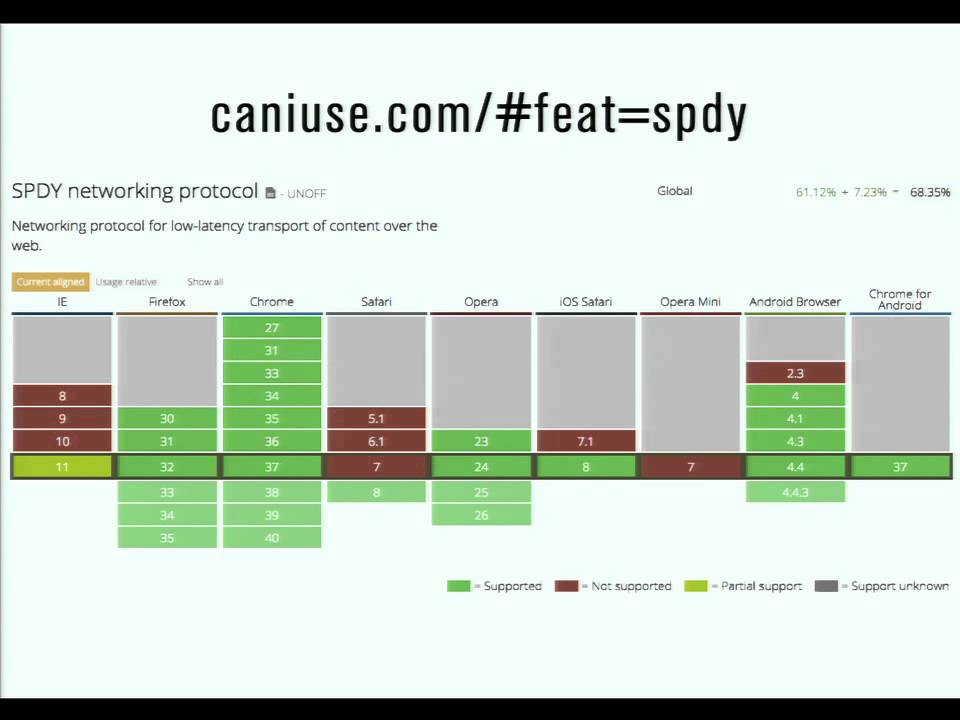

The Wikipedia articles have a decent overview, timeline, and browser support information…

I’m not sure whether all the necessary compatibility factors line up yet, so that would have to be considered. But I wanted to give a heads-up that HTTP/2 is likely to be a strong option for further speeding up the Whonix.org website in the near future.

And, as a bonus, it is potentially good for overall web anonymity, since loading everything over one multiplexed connection may make fingerprinting HTTPS webpages by netflows a bit harder.